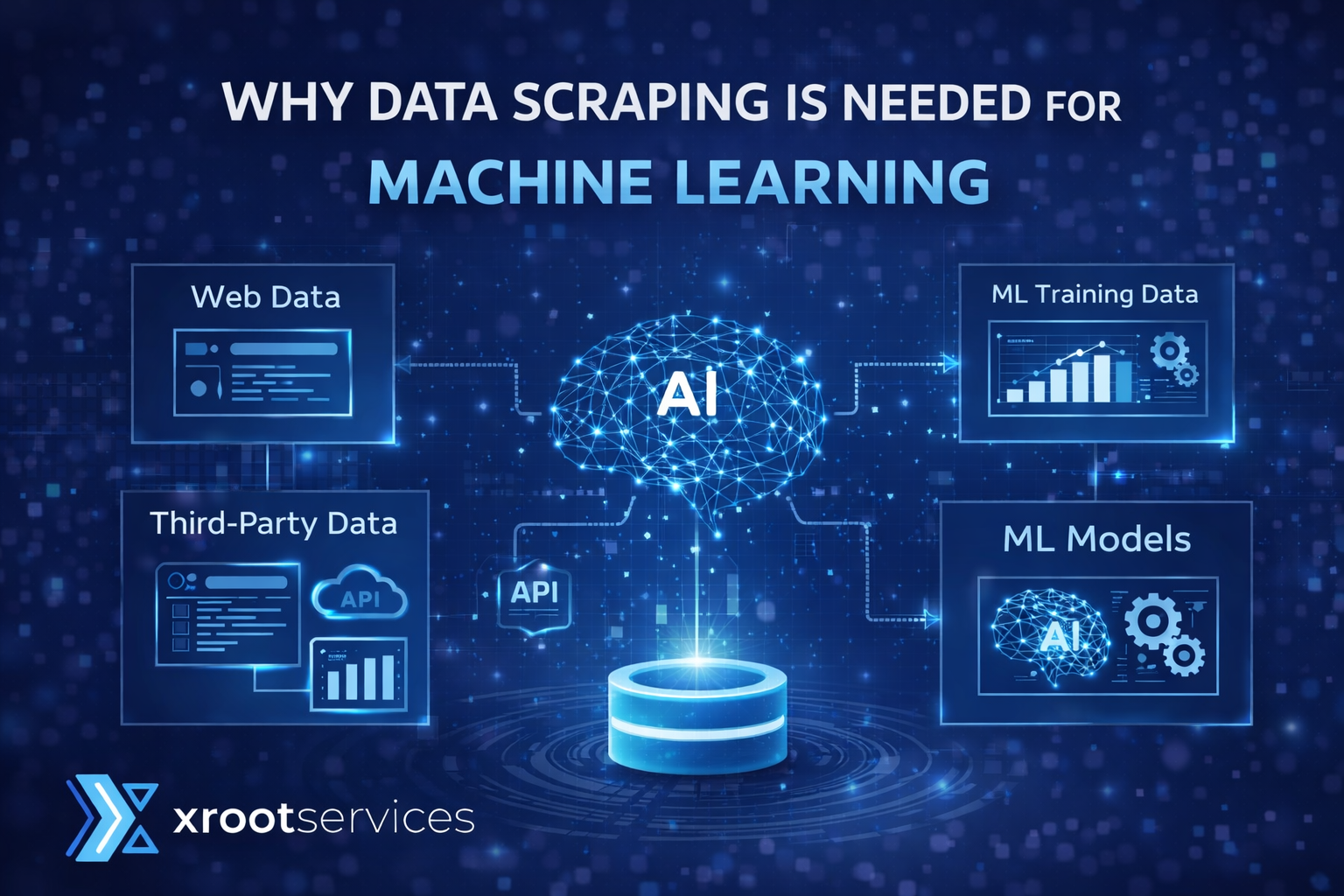

Why Data Scraping is Needed for Machine Learning

Artificial Intelligence (AI) has become an essential part of everyday life. Hardly a day goes by when you don’t use a byproduct of AI, directly or indirectly: from social media algorithms to e-commerce recommendations, almost everything has some AI in it. AI is even widely used in scraping itself, to build self-healing bots that keep scraped data flowing without interruption.

But have you ever wondered how these machines became so smart, what they learn, and how they learn it? Can we, as ordinary humans, train a machine by hand so that it works just the way we want?

Unfortunately, the answer is no. Creating and training AI needs a huge amount of data, more than a person could create by hand in decades, which is why you can’t train a machine manually. So how are these machines trained, you must be thinking. That’s where data scraping, or web scraping, comes into the picture. In this blog we will discuss how web scraping is an essential part of creating AI and why it is important.

Excited? Let’s get started!

Table of Contents

1. How Web Scraping Helps in Machine Learning?

2. Conclusion

How Web Scraping Helps in Machine Learning?

When we talk about creating a smart tool, the first thing that comes to every developer’s mind is training. The bot should make smart choices on the cases it has already seen and solved, and also on new cases, by understanding the pattern and nature of the problem. That is only possible when there are enough cases to learn from, along with a constant supply of high-quality data in huge amounts.

To collect that kind of data, many industries use web scraping. Web scraping does not just collect the data; it also makes sure the data is well structured and clean, so that it keeps its quality and reliability and can be fed to the bot for better results. Both the quality and the quantity of the data are non-negotiable: if either one is compromised, the bot is likely to underfit or overfit.
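To make the "well structured and clean" part concrete, here is a minimal sketch of a cleaning step for scraped records. The field names (`title`, `price`) and the `clean_records` helper are made up for illustration, and a real pipeline would usually need more rules than these two.

```python
def clean_records(records, required=("title", "price")):
    """Return deduplicated records that have every required field filled in.

    `required` is an assumed schema for this example, not a fixed standard.
    """
    seen = set()
    cleaned = []
    for record in records:
        # Drop None values and trim whitespace so " Lamp " and "Lamp" match.
        normalised = {
            key: str(value).strip()
            for key, value in record.items()
            if value is not None
        }
        # Skip rows where a required field is missing or empty.
        if any(not normalised.get(field) for field in required):
            continue
        # Treat rows with the same required values (case-insensitive) as repeats.
        key = tuple(normalised[field].lower() for field in required)
        if key in seen:
            continue
        seen.add(key)
        cleaned.append(normalised)
    return cleaned


raw = [
    {"title": " Desk Lamp ", "price": "19.99"},
    {"title": "desk lamp", "price": "19.99"},   # repeated row
    {"title": "Office Chair", "price": None},   # missing price
    {"title": "Notebook", "price": "3.50"},
]
cleaned = clean_records(raw)
print(cleaned)
```

Only the first lamp row and the notebook row survive: the repeated row and the row without a price are dropped before the data ever reaches the bot.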

Now you must be wondering what underfitting and overfitting are in machine learning, and how they affect the bot during training as well as after it. So let’s understand them in detail.

Underfitting

Underfitting happens when the bot performs poorly both during training and after it is launched. An underfit bot can’t solve even simple problems, because they are too complex for it, and when it does produce an answer, that answer is usually wrong.

In other words, these bots are far too simple for real-world issues. Even a simple real-world problem is too complex for them, which is why they lose their popularity from the start.

This happens when the training data provided to the bot is very small in quantity as well as very simple. The bot doesn’t get enough data to understand the patterns, which is one of the reasons it can’t recognize patterns in real-world use, and that hurts its accuracy and overall performance.
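Here is a small sketch of what underfitting looks like in numbers. It is only an illustration under stated assumptions: the "bot" is a straight-line model fitted with ordinary least squares, the data follows a made-up curve (y = x squared), and the helper names `fit_line` and `mean_squared_error` are invented for this example. The symptom to look for is a large error on the training data and on new data alike.

```python
def fit_line(xs, ys):
    """Fit y = slope * x + intercept with ordinary least squares."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    slope = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / sum(
        (x - mean_x) ** 2 for x in xs
    )
    intercept = mean_y - slope * mean_x
    return slope, intercept


def mean_squared_error(xs, ys, slope, intercept):
    """Average squared gap between the line's predictions and the true values."""
    return sum((slope * x + intercept - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


# Assumed toy data: the true pattern is a curve, which a straight line cannot follow.
train_x = [-3, -2, -1, 0, 1, 2, 3]
train_y = [x ** 2 for x in train_x]
test_x = [-2.5, -1.5, -0.5, 0.5, 1.5, 2.5]
test_y = [x ** 2 for x in test_x]

slope, intercept = fit_line(train_x, train_y)
train_error = mean_squared_error(train_x, train_y, slope, intercept)
test_error = mean_squared_error(test_x, test_y, slope, intercept)

print(f"training error: {train_error:.2f}")
print(f"test error:     {test_error:.2f}")
```

Both errors stay high because the model never captured the pattern in the first place, which matches the description above: poor during training and poor after launch.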

Overfitting

Overfitting is a problem that many businesses face after their bots are launched or their products go live. An overfit bot performs exceptionally well on the cases it saw during training, but the results are not the same when it is exposed to real-world problems.

This happens when the bot is too complex and the data is of low quality or very simple. Low-quality data is data that is repeated, irregular, noisy or unstructured. With such data, the bot tends to memorize the quirks of its training examples instead of understanding the concept, so it can’t recognize patterns as effectively as it should.
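The following sketch shows that gap between training and real-world results on a toy example. Everything in it is an assumption made for illustration: the "bot" is a nearest-neighbour memoriser (`predict_nearest`), the true rule is "label 1 when x is 5 or more", and two training labels are deliberately wrong to stand in for noisy scraped data.

```python
def predict_nearest(train, x):
    """Return the label of the closest training point (a pure memoriser)."""
    _, nearest_label = min(train, key=lambda pair: abs(pair[0] - x))
    return nearest_label


def accuracy(train, samples):
    """Share of samples whose predicted label matches the given label."""
    correct = sum(predict_nearest(train, x) == label for x, label in samples)
    return correct / len(samples)


# Assumed noisy training set: the labels for x=2 and x=7 contradict the true rule.
train = [(1, 0), (2, 1), (3, 0), (4, 0), (6, 1), (7, 0), (8, 1), (9, 1)]
# Clean, unseen samples that follow the true rule (1 when x >= 5).
test = [(1.9, 0), (2.6, 0), (4.2, 0), (6.8, 1), (7.4, 1), (8.7, 1)]

train_accuracy = accuracy(train, train)
test_accuracy = accuracy(train, test)

print(f"training accuracy: {train_accuracy:.0%}")
print(f"test accuracy:     {test_accuracy:.0%}")
```

The memoriser scores perfectly on the data it was trained on, because every point is its own nearest neighbour, but it repeats the noisy labels on nearby unseen points and gets only half of them right.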

So providing a small amount of high-quality data, or a huge amount of low-quality data, both lead to an outcome that nobody wants. Developers need to balance the two by providing a huge amount of data that is also of high quality; otherwise the bot won’t work.

If you really want to scrape this amount of data on your own, you can learn how to scrape with AI, so that you can automate your scraping process and raise the calibre of your scraper. To know more about web scraping automation, do read this article next. XRootServices

Conclusion

Today, every business needs the power of AI, whether it is in influencing, manufacturing or retailing. AI gives a business a boost with not just a tool but an intelligent tool. These tools not only complete your work efficiently and accurately but also know the right moment to act. For example, if the market is in your favor, you can grow your product’s market with repricing tools, and when the market crashes, the tool can reduce your prices so that you can compete with other sellers.

But as we discussed, all these bots and tools need to be trained before they can make effective decisions without human intervention. For that, they need cases and test data, so that they are prepared for every case that may occur in the future and know how to respond. And for that we need web scraping, because it is next to impossible to collect this amount of data manually, and to do so every day.

So if you are a company or an individual planning to build a bot for yourself or for your business, you will need web scraping, whether you like it or not.

So, be smart and choose one of the leading web scraping companies. XRootServices